The Destabilizing Reality of AI and Forced Mass Adoption

A conversation keeps finding me – with friends, colleagues, neighbors and strangers – and when that happens, I know a societal trend has moved beyond media talking points and into reality. I track many issues across what’s left of the “viable” media landscape, and the destabilization AI is causing across multiple demographics sits at the top of my radar.

Let’s be blunt here. AI is a destabilizing technology. It was violently forced onto society without consent. Period. End of story. The backlash against AI is here, it's growing and it's justified.

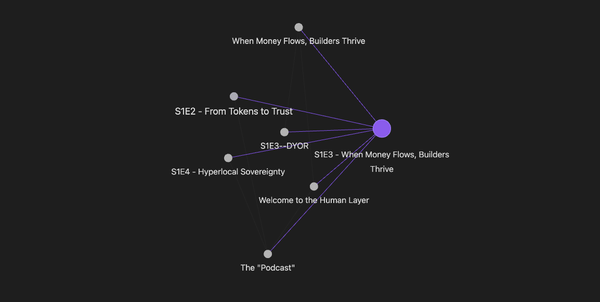

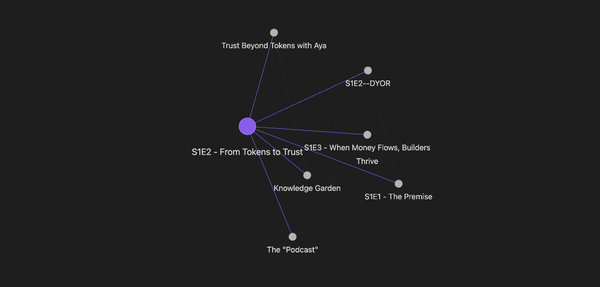

If we’re going to survive this age of forced AI adoption, because there’s no way to put the AI genie back in the bottle, we need to understand what is happening and put our fear in the back seat long enough to make peace with AI's role in our lives. We also must determine our individual path with AI and preserve our own sovereignty and humanity at the same time. (We talked about this recently on The Human Layer.)

To clarify, I’m a “high tenure” user of AI and have been for 3 years now. I began learning AI because it scared the shit out of me and I could see the cycle of disruption at the hands of tech happening again, a cycle I’d been through many times as a journalist, producer and most recently as a knowledge worker.

An emergent technology usually surfaces in labs, hackerspaces and def/dev cons and makes a buzz, but stays underground. When it finally has enough momentum/scale, it surfaces into the mainstream containers and can destabilize entire industries and professional trajectories.

I’ve lived through this at least 4 times. The first time I got burned, hard. Transitioning from analog film photojournalism to digital photography and multimedia production almost cost me my business because I was stubborn and elitist about film. I learned the hard way not to ignore destabilizing technologies.

AI is different. To truly unpack and understand what’s happening, we have to acknowledge an entire industry and culture of technocratic broligarchs who are driving forced adoption. On the highest and most abstract levels, the CEOs of a handful of companies building frontier AI models are playing God with technology and the economy. The reality that a handful of tech bros possess such power is a gross indictment of our dysfunctional political infrastructure and the economy itself.

But that’s how late-stage capitalism works – purchase a few politicians, overwhelm them with tech specs they’re too old to understand, scare the crap out of them before an election and ram through a technology designed to upend all traditional labor and economic structures while creating a circular economic structure of chaos for you and your Silicon Valley buddies, which then concentrates wealth and power, and the circle keeps spinning. Until it stops.

Let’s roll it back to the local level because there’s little we can do with what I stated above. That reality is locked into structures that need serious dismantling, but that’s beyond our control. I want to focus on the destabilization happening at the org and individual level so we can have a healthy conversation about what forced adoption actually looks like at scale.

In crypto-land, where I’ve spent way too much of the last decade, we focused hard on the “mass adoption” dilemma. Our tech was and still is too complex for mass adoption. And honestly, after seeing what AI is doing at scale, that failure of mass adoption might be a good thing.

With AI, there’s no friction for users. My 85-year-old neighbor can use AI to find an answer and never have to learn a thing. It just exists now in his phone, browsers and computers. He barely knows the difference between a search engine and an AI model because he doesn’t have to. In crypto, to fully use it as designed, you must understand self-custody and take full responsibility for your tech and how you use it, and your money is on the line. That requires a long onboarding process, constant technical learning of new advances and staying actively engaged with the tech and the industry itself. It's sovereign by design.

Because there is no user friction, everyone can just, well – use it. And the tech oligarchs know full well that their algorithms have trained billions of people to just consume the information their devices feed them without questioning the source, provenance or validity of the output. Just input, output and consumption. On repeat. At scale.

Society is conditioned to click, scroll and consume while never questioning the technological infrastructure that allows people to bypass that questioning at scale.

Many sacrificed autonomy for convenience and now everyone must suffer the consequences.

Merge that reality with the fact that most business owners, leaders and executives see AI through a lens of egoic distortion. CEOs can now use AI to bring ideas to life at scale and fast AF. But when done without deliberation or intent, the CEO is now spinning chaos faster than anyone on their team can adjust and absorb. And the executives are also declaring that their entire org structure should change: AI (or the excuse of AI) can eliminate an entire class of management workers from their operating budgets, and they can reallocate the savings or increase profits. So, the executives then declare that everyone in the company must use AI and increase productivity in unnatural and unnecessary ways.

Executives across the board are pushing the emotional labor of AI adoption onto the laborers themselves without any tools, support or empathy. This is literally how patriarchal leadership works at the highest levels, and it's baked into probably 95% of corporations and organizational structures.

And here’s where the destabilization truly kicks in for our scenario. Middle management is now on the chopping block – and they know it. An entire class of labor, the knowledge-working leadership layer that works with the humans of the org to meet KPIs and OKRs, is now deemed optional. We can debate the validity of their roles in another conversation. The reality that a large portion of our consumer economy is propped up by this management layer and their paychecks is non-negotiable.

Management is now optional in the eyes of the CFO who’s looking at their org chart and fever-dreaming about massive salary reductions and savings on health care. Optional in the eyes of the CEO who is also fever-dreaming about the explosive growth his org can undergo to move the company towards some wild-ass goal that AI now makes possible on paper. Or maybe he's just securing his golden parachute by producing a profit increase of 500%.

And here's the story so many are living in real time. The manager sits on a leadership call where the CFO announces a re-org coming next quarter; the CEO declares the company will be “AI native” or “AI driven” by next quarter to increase output at scale; the CTO declares that every manager’s team should show X% of token usage each week to justify staying employed at the company; and the CMO says they'll increase content output volume by X% to increase conversions by X amount. The head of HR states that the next round of performance reviews for management and their teams will include individual and team token use metrics and the org will place under-performers on PIPs or withhold promotions/bonuses/raises.

The manager leaves the meeting in terror. Everything he was told to do in his career's trajectory to this point just got tossed out the window. His team is knowledge-based, so AI workflows are not as intuitive for them as they are for engineers or devs. His team is still deploying marketing, communications or operations workflows for a world that no longer exists, and changing that reality is not under the manager’s control. His orders come from the CMO, who just created a non-negotiable mandate for AI slop at scale.

The manager opens his phone after the meeting, still trying to process the new AI mandate that his team has no tools or skills to implement by next quarter, and then he sees the headlines that 3 tech companies just like his eliminated management layers outright and declared themselves AI native. The manager then sees a text from his wife requesting he pick up diapers after work for his new baby girl. His heart rate is through the roof, cortisol is gushing and his brain is doing the math on paying for an infant with no health insurance and no paycheck.

Our now completely destabilized manager rolls into the next meeting with his team carrying volatile, identity-level fear. Unable to process what’s happening in real time, he just declares that his team must start using AI in all of their workflows, they need to figure it out as soon as possible and he’ll start enforcing that arbitrary rule in two weeks. The manager is in a death spiral of sheer panic because he knows the demands are not possible. He also doesn't possess the leadership skills for this type of transition at scale. Most managers don’t have a chaos engineering skillset or the regenerative leadership skills necessary to carry teams through such a deep transition. Patriarchal leadership 101 doesn’t prepare managers for this type of work.

AI just destabilized this manager's foundational identity and reality. He is now in a spiral of chaos that will end with the loss of his job next quarter and might eliminate most of his team, because there is no possible way to achieve the new company metrics by then.

Side note: I just explained why "token-maxxing" is a thing now and how it's causing internal chaos and costing a fortune inside large corporations. A leaderboard based on token use incentivizes manic behaviors to produce volume, not strategic use-cases or efficient outputs.

Now, let’s look at the team itself. Let’s say our manager has 3 people on his team who are younger, a bit more AI fluent and are thinking, “hell yea – work is paying for me to go fully AI native”. They install cowork on their computers and give it MCP access to everything (because the manager never taught them HOW to use AI, just that they had to use AI).

Our ambitious workers then begin jamming AI into every possible workflow they can find and eat through cowork tokens like it's a Pac-Man game circa 1985. Eat all the tokens as fast as possible and level up (while also creating security breaches that the CTO will spend a fortune fixing in Q4). Our young workers feel empowered and God-like. They’re producing work and workflows that are super-human, with strategies and finished content that reinforce their felt brilliance.

After a few weeks of these manic cycles of endless content spinning and non-human workflows, the workers are exhausted and completely useless. The manic behaviors that AI is designed to promote and propel just landed these workers in a manic phase of destabilization. They need a mental health intervention, but no one can identify what is actually happening. AI just placed them in a manic creation phase for 2 weeks and their nervous systems completely collapsed.

On one team of 25 people, the leader is completely destabilized because their identity and livelihood are now in question and will probably be lost in a matter of months. The young laborers are now completely destabilized because they did what the manager dictated without any guidance or grounding from their leaders or peers and blew their cognitive load on a manic creation sprint leaving them in a precarious mental state that may or may not resolve without intervention.

Now you have the remaining 21 people on that team, probably a mix of senior and junior level knowledge workers who are watching all of this thinking--

- A: fuck AI, it's dangerous and stupid

- B: I don’t even know what it means to “apply AI” to my very necessary and human-centric role

- C: my boss gave me zero tools to learn AI in our specific context

- D: how am I going to navigate this atrocious job market when I get fired next quarter because my boss is incompetent?

Toss in the current headlines for some anxiety sprinkles: a broken health care system, gas shortages in a few weeks, politicians and nation-states using the same tech to bomb schools, kidnap their neighbors, create surveillance dragnets never seen before, gamble on war crimes and launder money openly for corrupt politicians. We're looking at an absolute recipe for societal level destabilization and disaster by an emergent technology.

We're all living through a mass destabilization event across an entire society that remains unspoken and unseen because we barely have language for how to use AI, much less clear terminology and protocols for helping people and society restore their destabilized nervous systems. The impacts can destroy relationships, families, communities and entire ecosystems of humans.

Here’s the cold hard truth about all of this--

- yes it’s happening, no you can’t avoid learning AI if you still put food on the table with screens/knowledge,

- yes AI is violent and violating, no you can’t avoid it if you need to make money to stay housed,

- yes there are healthy ways to use AI and they co-exist with unhealthy ways,

- yes there are ways to preserve your cognitive abilities while still using AI, no your children should not be using AI (and parents-- that's on you),

- yes you can make your workday more efficient and reclaim some time to go touch grass by deploying AI, no your boss isn't going to tell you that part,

- yes you can change your life by sprinkling in a bit of creative AI use -- on your own terms and with intention,

- and no AI is not a fad or going away, probably ever.

The truth about AI is that we must hold the promise and the peril of this technology AT THE SAME TIME as we navigate forced mass adoption – as labor, as leaders, in community and as a society.

And sure, many pundits and “influencers” are drawing lines in the sand that say “I will never use AI because it's brain rot and people who use it are lazy, shitty grifters”. Guess what, you’re part of the problem too. People said the same thing about the damn internet – you know, that thing you use to promote your books and lock your articles behind paywalls. I truly wish those declarations came with the acknowledgment of privilege that allows someone to avoid AI use entirely.

The rest of us, the ones actively in the labor force navigating these impossible situations of forced AI adoption, are learning to use this technology because we are staring down our literal material survival. Period.

I say this while holding the paradox of AI itself: it is a transformational technology, and if one can find the time and tooling to learn it ON THEIR OWN TERMS, they can apply it to all situations where it would make life easier or more efficient, or even be life-changing. I’ve experienced all three and so have many of my colleagues who chose to adopt AI workflows early on so they could navigate reality.

If you're still with me and still harbor hope, here are some possible solves. My colleagues and I are working on these solves at scale through our 501c3 designed to support and educate journalists and indie media through emergent technologies. Check back here in a few weeks or follow me on LinkedIn.

Taylor and I also built the commsOS methodology as a teaching tool for AI workflows, on an individual and org level. We'll have workshops soon and our methodology is free to learn on your own – open sourced for everyone to begin establishing a relationship with AI on their own terms. Our methodology is also under the stewardship of Factland 501c3 where we'll be teaching others to reskill.

Grab a friend or bestie and build a creative project together using AI. That's how Taylor and I built The Human Layer and CommsOS. Co-creation allowed us to learn together and in a way that was very human and balanced. We hold the peril and the promise of this tech in how we work with it and have baked grounding and care into our processes with this tooling as a team of two.

Start with a shared interest or passion (food, sports, healing, yoga, movies, book clubs, writing, etc), then build your own soloOS together. Make it fun. Make a mess. Learn how the tools work so when you need to use them on the fly, the fear has been replaced with experience and understanding.

And stay tuned, our workshops through Factland 501c3 will be launching in the coming weeks and will be run by veteran journalists and academics who are experts in emergent technologies and have been teaching adoption to non-technical learners for a decade.

This article is organic – no AI touched it at any point of creation or editing. But if it feels a bit like a machine, that's because I use AI as a comms professional serving my clients at scale and making my way through the destruction of all of my industries. And I use it too often.